Stop babysitting DAGs.

Start shipping data.

Airflow handles orchestration. Everything else is on you. Ascend replaces your entire stack with one platform where pipelines build, run, and fix themselves.

You picked Airflow because you wanted control over orchestration. It's flexible, battle-tested, and open-source. But as your data stack grows, Airflow becomes a project unto itself, and your engineers become its full-time maintainers.

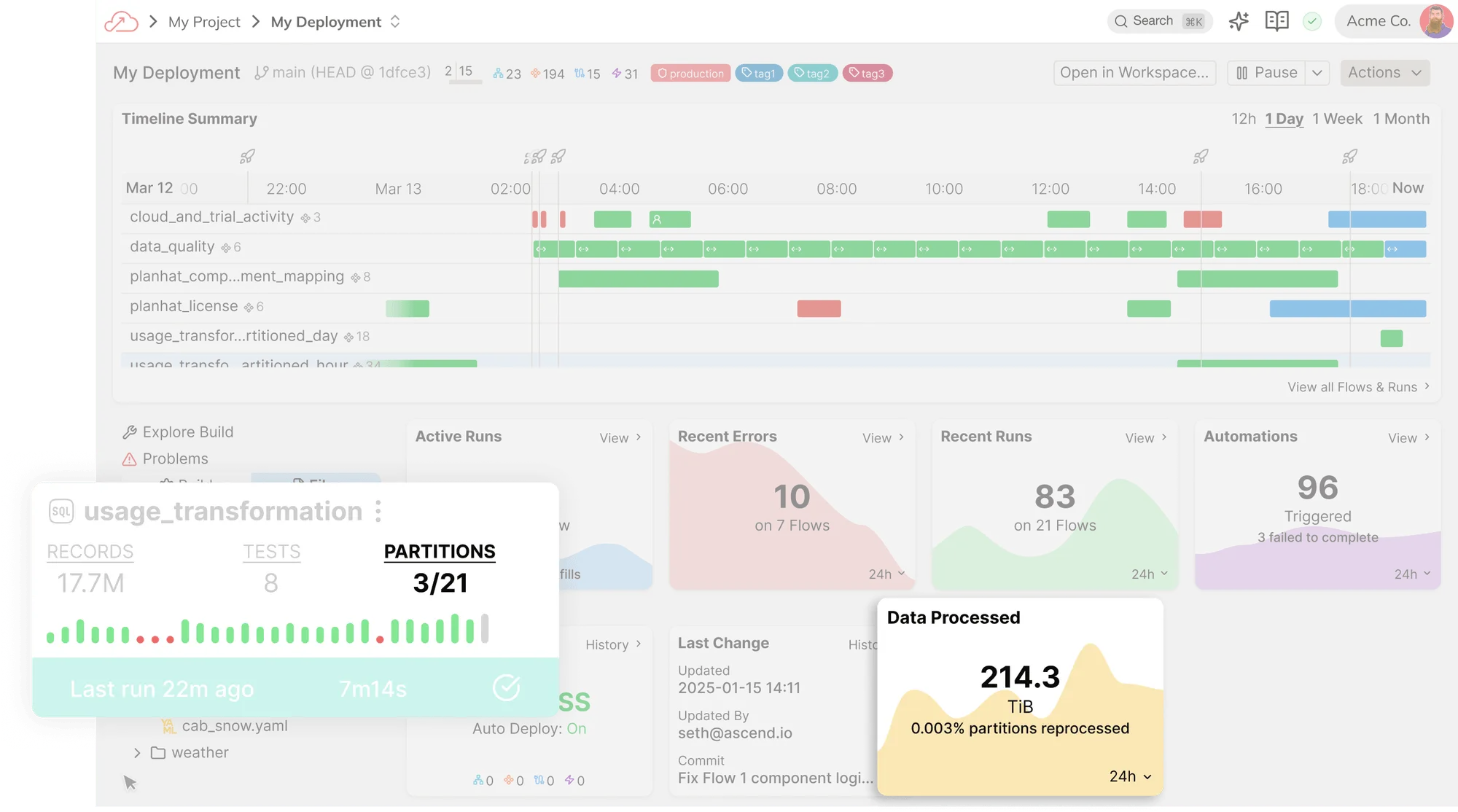

Ingestion. Transformation. Orchestration. Observability. One platform, one metadata layer, not five tools held together with YAML and hope.

A code-first IDE with AI at its core. Write SQL or Python, connect to any source, and push to production with full version control.

Write transformations in the language you already know. Mix SQL and Python in the same pipeline without switching tools or contexts.

Inline code completions, context-aware suggestions, and natural language pipeline creation with Otto, Ascend's agentic copilot.

Flexible connectors and dynamic schema handling for lakes, warehouses, databases, APIs, and legacy systems.

Ascend's DataAware engine replaces brittle cron jobs and hand-coded DAGs with intelligent, event-driven orchestration. Pipelines adapt as your data changes. No manual rewiring required.

Stop hand-coding orchestration graphs. Ascend builds and adapts your DAGs automatically as pipelines evolve, so dependencies never fall out of sync.

Build lightweight agents in markdown and YAML that alert Slack on schema drift, open GitHub issues on failures, or page on-call through PagerDuty.

Built-in CI/CD with automated testing and validation. Schema changes are handled dynamically so upstream shifts don't cascade into downstream failures.

Observability and cost optimization are built into every layer. No plugins, no config, no separate monitoring stack. Everything is visible from the moment your first pipeline runs.

Trace every data flow from source to destination with full change history and auditability. See exactly where data comes from and what it affects downstream.

SHA-based fingerprinting detects exactly what changed. Process only new and modified data, reducing warehouse costs by up to 83%.
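To make the idea concrete, here is a minimal sketch of hash-based change detection. It is an illustration of the general technique, not Ascend's actual implementation: the partition names, the row format, and the `fingerprint` and `changed_partitions` helpers are all hypothetical.

```python
import hashlib


def fingerprint(rows):
    """Return a SHA-256 hex digest over a partition's rows (order-sensitive)."""
    digest = hashlib.sha256()
    for row in rows:
        digest.update(repr(row).encode("utf-8"))
        digest.update(b"\x00")  # separator so adjacent rows cannot blur together
    return digest.hexdigest()


def changed_partitions(partitions, previous):
    """Return the names of partitions that are new or whose fingerprint changed."""
    return sorted(
        name
        for name, rows in partitions.items()
        if previous.get(name) != fingerprint(rows)
    )


# Hypothetical data: two partitions seen on the last run, three on this run.
yesterday = {
    "2024-01-01": [(1, "a"), (2, "b")],
    "2024-01-02": [(3, "c")],
}
today = {
    "2024-01-01": [(1, "a"), (2, "b")],   # unchanged, can be skipped
    "2024-01-02": [(3, "c"), (4, "d")],   # modified, must be reprocessed
    "2024-01-03": [(5, "e")],             # new, must be processed
}

# Fingerprints stored after the previous run.
previous = {name: fingerprint(rows) for name, rows in yesterday.items()}

to_process = changed_partitions(today, previous)
print(to_process)  # ['2024-01-02', '2024-01-03']
```

Only the modified and new partitions are selected, so the unchanged one never reaches the warehouse again. How much that saves in practice depends on how much of your data actually changes between runs.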

Replace Fivetran, dbt, Airflow, and your monitoring stack with a single system. Fewer tools means fewer integration points, fewer contracts, and fewer things to break.

Ascend vs Airflow

Boost in team productivity

I can’t even fathom going back to Fivetran and dbt, where they're only doing a fraction of what you need.

What I just did in an hour would have taken me weeks previously.

Reduction in processing costs

Start your free trial in minutes. No credit card required.

Build pipelines 7x faster with AI that understands your data.

Cut warehouse costs by up to 83% with delta-only processing.

Replace Fivetran, dbt, Airflow, and your monitoring stack.