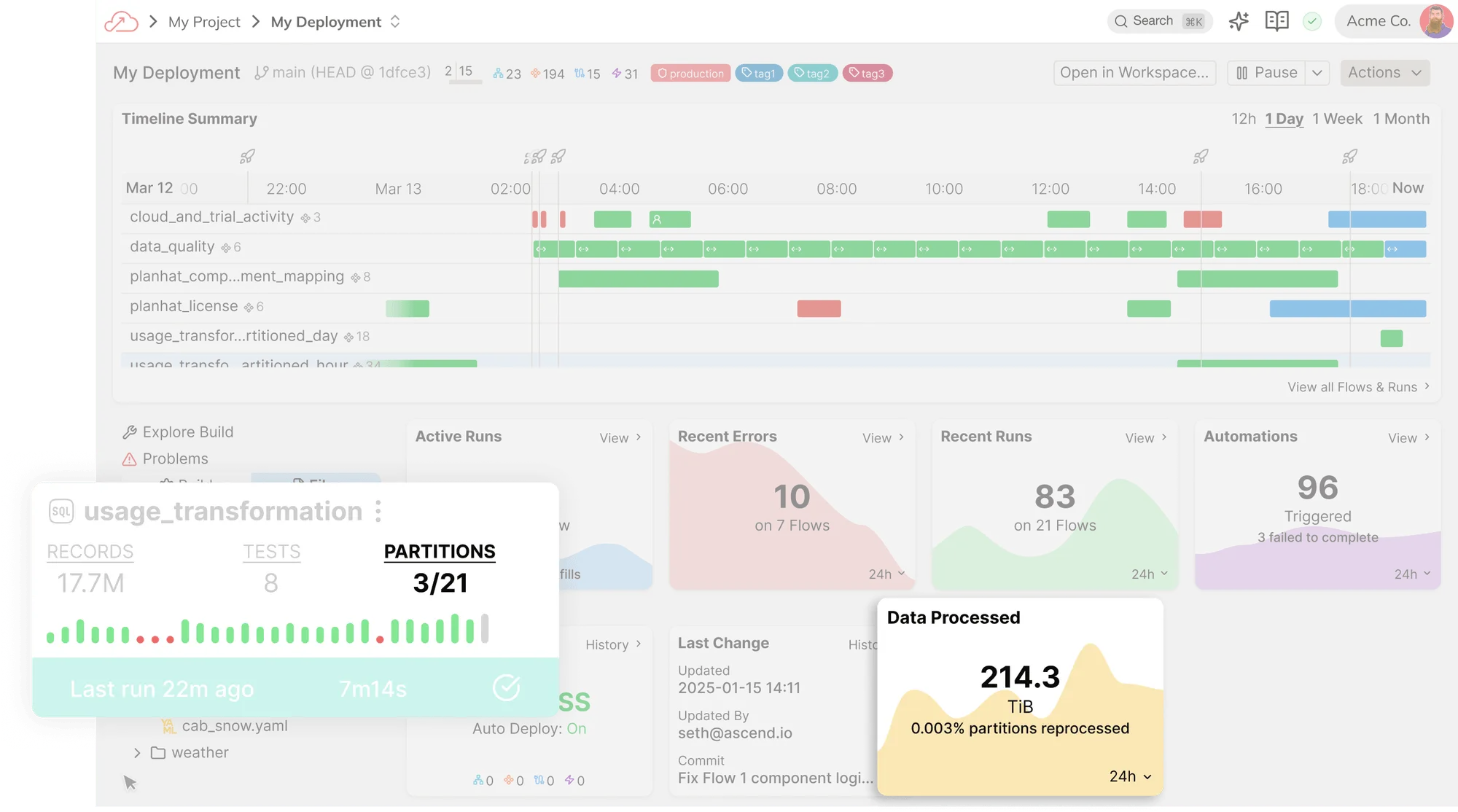

Talend is an enterprise data integration platform that generates Java-based ETL jobs, with capabilities spanning data quality, governance, and connectivity. Since Qlik acquired Talend, the product's direction has been shifting.

Ascend is a cloud-native data engineering platform that covers ingestion, transformation, orchestration, and observability in one system, with AI agents that understand your pipelines' lineage, dependencies, and costs. If your team is looking to move off legacy ETL, Ascend is the next step.